
Deepfakes: a weapon of mass deception ahead of global elections


An estimated quarter of the world's population will go to the polls in 2024. This raises concerns about misinformation and fraud that exploit artificial intelligence. Malicious actors could use AI tools to influence election results, and experts fear the impact of widespread deepfake use.

Nearly two billion people will go to the polls this year to vote for their preferred representatives and leaders. Major elections will be held in many countries, including the US, UK and India, and elections for the European Parliament will also take place in 2024. These elections may change the political landscape and the direction of geopolitics for years to come.

From theory to practice

Worryingly, deepfakes are likely to influence voters. In January 2024, a deepfake audio message impersonating US President Joe Biden was sent by robocall to an unknown number of voters in the New Hampshire primary. The message urged voters not to go to the polls and instead to "save your vote for the November election." The caller ID was also spoofed to make it appear that the automated message came from the personal number of Kathy Sullivan, former chairwoman of the state Democratic Party, who now heads a committee supporting Joe Biden's candidacy.

It's not hard to see how such calls could be used to deter voters from going to the polls to vote for their preferred candidate ahead of the November presidential election. The risk will be highest in closely contested elections, where a shift of a small number of voters from one side to the other determines the outcome. A targeted campaign that affects only a few thousand voters in key states could cause immeasurable damage by deciding the outcome of the election.

The threat of deepfake disinformation

Misinformation and disinformation were recently ranked by the World Economic Forum (WEF) as the number one global risk for the next two years. The report warns: "Synthetic content will manipulate individuals, damage economies and disrupt societies in numerous ways over the next two years ... there is a risk that some governments will act too slowly, facing a trade-off between preventing disinformation and protecting freedom of speech."

(Deep)faking it

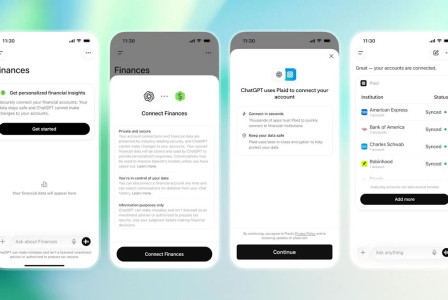

Generative artificial intelligence (GenAI) tools such as ChatGPT have enabled a wider set of individuals to create disinformation campaigns. With AI handling content production, malicious actors have more time to refine their messages and to ensure that their fake content is published and heard.

In the electoral context, deepfakes could be used to erode voters' trust in a particular candidate. After all, it is easier to convince someone not to do something than the opposite. If enough supporters of a political party or candidate are swayed by fake audio or video, their opponents stand to gain a significant advantage. In some cases, rogue states may seek to undermine confidence in the democratic process so that whoever wins will find it difficult to govern.

At the heart of the process is a simple truth: when people process information, they tend to value quantity and ease of understanding. This means that the more content we see with a similar message and the easier it is to understand, the greater the likelihood that we will believe it. This is why marketing campaigns consist of short and constantly repeated messages. Add to this the fact that distinguishing deepfakes from real content is becoming increasingly difficult, and you have a potential recipe for the destruction of democracy.

What are tech companies doing about it?

Both YouTube and Facebook were slow to respond to some deepfakes aimed at influencing recent elections. This is despite new European Union legislation (Digital Services Act) that requires social media companies to crack down on attempts to manipulate elections.

For its part, OpenAI said it will apply the Coalition for Content Provenance and Authenticity (C2PA) digital credentials to images generated by DALL-E 3. The cryptographic watermarking technology - also being tested by Meta and Google - is designed to make it harder to pass off fake images as genuine.

However, these are small steps, and there are legitimate concerns that the response to the threat will be too little and come too late as election fever grips the planet. When deepfakes are spread over relatively closed networks, such as WhatsApp groups or phone calls, it will be especially difficult to quickly identify and disprove fake audio or video.

The theory of "anchoring bias" suggests that the first information people hear is the one that sticks in their minds, even if it turns out to be false. If deepfakers reach swing voters first, no one knows who the ultimate winner will be. In the age of social media and disinformation, the observation by Jonathan Swift, the famous 18th century Anglo-Irish satirist, clergyman and political writer, that "falsehood flies, and truth comes limping after it" takes on a whole new meaning.
