Google introduces RT-2, an AI platform for communicating with robots

AI chatbots based on large language models (LLMs) are dominating the news thanks to the rapid surge in popularity of ChatGPT, Bing Chat, Meta's Llama, and Google Bard, but they are a relatively small piece of the overall AI landscape. Robotic hardware that uses sophisticated methods to either replace or assist people is another field that has been researched intensively for years. Google recently revealed a new AI model that marks a step forward in this field.

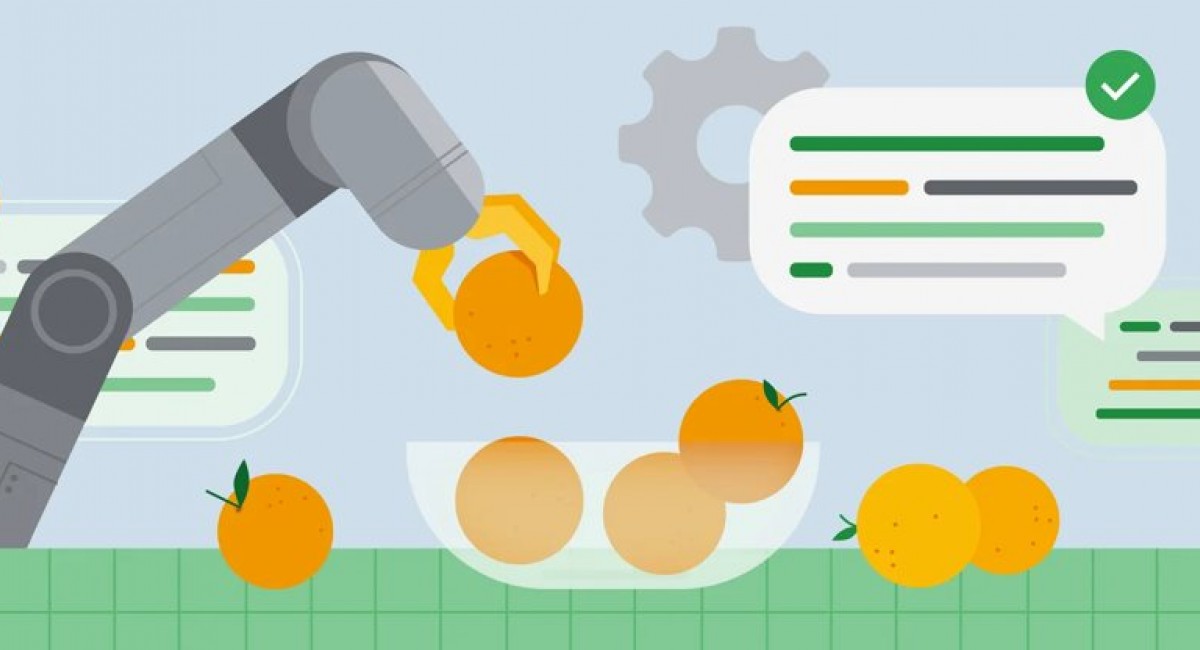

Robotics Transformer 2 (RT-2) is Google's most recent AI model, and it has one very clear goal: letting you tell a robot what you want it to do. To accomplish this, it relies on a vision-language-action (VLA) model that Google claims is the first of its kind. Although earlier models such as RT-1 and PaLM-E improved robots' reasoning capabilities and helped them learn from one another, the robot-dominated world depicted in science fiction films still looks like a concept from the very distant future.

RT-2 seeks to close the gap between fiction and reality by helping robots comprehend their surroundings with little to no assistance. Using a Transformer-based model, it learns about the world from text and images available on the web and then translates that knowledge into robotic actions, even in test situations it has not been explicitly trained on. In this way, it is conceptually very similar to LLMs.

To illustrate what RT-2 can do, Google has described a number of use cases. An RT-2-powered robot, for instance, would be able to understand what trash is, distinguish it from other objects in the environment, move to it and pick it up, and dispose of it in a bin, all without having been specifically trained on any of these tasks.

Google has also released some impressive RT-2 test results. Across more than 6,000 trials, RT-2 performed just as well as its predecessor on "seen" tasks. More intriguingly, it performed nearly twice as well in unseen scenarios, scoring 62% versus RT-1's 32%. Although the applications of such a technology already appear very real, it will take time to mature, because legitimate use cases frequently require thorough testing and even regulatory approval.
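As a quick check of the "nearly two times" figure, the short Python sketch below works through the arithmetic using only the two scores quoted above. The function and variable names are illustrative and do not come from Google.

```python
def improvement_ratio(new_rate: float, old_rate: float) -> float:
    """Return how many times larger new_rate is than old_rate."""
    if old_rate <= 0:
        raise ValueError("old_rate must be positive")
    return new_rate / old_rate


# Scores on unseen scenarios as quoted in the article.
rt2_unseen = 0.62
rt1_unseen = 0.32

ratio = improvement_ratio(rt2_unseen, rt1_unseen)
point_gain = (rt2_unseen - rt1_unseen) * 100  # gap in percentage points

print(f"RT-2 vs RT-1 on unseen scenarios: {ratio:.2f}x, +{point_gain:.0f} points")
```

The ratio comes out just under 2, which matches the "nearly twice" wording, while the absolute gap is 30 percentage points.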
