What changes the AI Act brings

At a time when Artificial Intelligence (AI) permeates every aspect of our lives, the European Union is breaking new ground as the first legislator worldwide to attempt to regulate AI. The European AI Regulation (AI Act), which is directly applicable in Member States, was adopted by the European Parliament on 13 March 2024. The new legislation is expected to enter into force towards the end of May, giving businesses 6 to 24 months to adapt. Fines for non-compliance can reach up to €35 million or 7% of total worldwide annual turnover.
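To make the fine ceiling concrete, here is a minimal sketch in Python. It assumes that the higher of the two amounts applies, which is how the Regulation words the top tier of fines for undertakings. The function name `max_fine_ceiling` and the turnover figures are purely illustrative and not taken from the Act.

```python
FIXED_CAP_EUR = 35_000_000   # fixed ceiling quoted in the text
TURNOVER_PERCENT = 7         # percentage of total worldwide annual turnover


def max_fine_ceiling(annual_turnover_eur: float) -> float:
    """Return the upper bound of the fine for the most serious infringements.

    Assumption: the higher of the fixed amount and the turnover-based
    amount applies. This is a ceiling, not the fine actually imposed.
    """
    if annual_turnover_eur < 0:
        raise ValueError("turnover must be non-negative")
    turnover_based = annual_turnover_eur * TURNOVER_PERCENT / 100
    return max(FIXED_CAP_EUR, turnover_based)
```

Under this assumption, a company with a turnover of €1 billion faces a ceiling of €70 million, while for a company with a turnover of €100 million the fixed €35 million ceiling is the relevant one. The two amounts coincide at a turnover of €500 million.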

Overview of the AI Act

The AI Act takes a risk-based approach: AI systems are regulated according to the risks they pose to society and to individuals. The framework aims to ensure that AI systems are developed and used in a way that respects human rights, takes account of security concerns, and upholds EU values.

1. Prohibited AI systems: Certain AI applications that are considered to present "excessive risks", such as those that use manipulative techniques or exploit vulnerable groups, are banned outright. These systems must be phased out by the end of 2024.

2. High-risk AI systems: AI systems that could significantly affect the safety, health or fundamental rights of people must comply with strict requirements before they enter the EU market. This category includes systems in important areas such as health, critical infrastructure, education and employment.

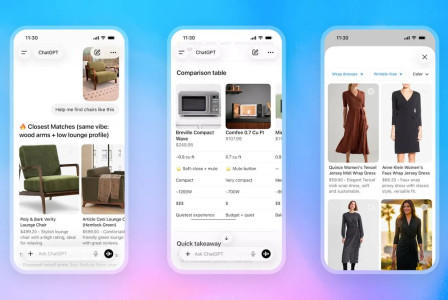

3. Limited-risk AI systems: This category includes AI applications such as chatbots, which will be subject to transparency obligations so that users know they are interacting with AI.

4. Minimal-risk AI systems: The majority of AI applications, which pose minimal risk, can be developed freely, without additional regulatory obligations.

5. General-purpose AI systems: The legislation separately addresses general-purpose AI (GPAI) systems, which are characterised by their broad capabilities and potential systemic risk, such as the various generative AI chatbots available on the market.

Next steps for businesses

As the EU prepares for the implementation of the AI Act, businesses should immediately assess their own use and deployment of AI technologies against the new regulatory framework. The next steps are:

- Systems mapping: Create a registry of the key elements of all systems that fall within the scope of the AI Act, i.e. those defined as "AI systems". At the same time, businesses will need to assess their role in the AI value chain as a user, developer, importer or distributor of such systems.

- Risk assessment: Evaluate AI systems to determine their risk category under the new framework. This categorisation is crucial for planning compliance with the new legislation, as different deadlines apply for each category.

- Gap analysis: Analysing the company's current compliance status against the obligations arising from the AI Act is key, and should lead to an action plan to remedy any gaps.

- Harmonisation and documentation: Specifically for high-risk AI systems, companies should prepare for detailed regulatory harmonisation and compliance assessments, including with regard to human rights impacts, and draw up the necessary written documentation, covering data management and transparency measures.

- Training: Establish a comprehensive staff training programme, with differentiated levels of complexity and content.

- Sandbox innovation: Explore potential participation in regulatory sandboxes to test and develop innovative AI solutions in a controlled regulatory environment.

- Staying up to date: Given the evolving nature of AI technology and related legislation, the key to continued compliance is to keep up to date, in particular with the additional guidance expected from the EU institutions and the Greek AI regulator.
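The systems mapping and risk assessment steps above can be sketched as a simple internal registry. The Python example below is only an illustration under stated assumptions: the class and field names, the tier labels ("limited-risk" for systems with transparency obligations, "minimal-risk" for the rest) and the three sample entries are hypothetical, and the tiers assigned to them are not a legal classification.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


class Role(Enum):
    USER = "user"
    DEVELOPER = "developer"
    IMPORTER = "importer"
    DISTRIBUTOR = "distributor"


@dataclass
class AISystemRecord:
    name: str
    purpose: str
    role: Role                      # the company's role for this system
    tier: RiskTier                  # outcome of the internal risk assessment
    general_purpose: bool = False   # general-purpose AI is addressed separately


# Lower number = review first (hypothetical prioritisation rule).
REVIEW_PRIORITY = {
    RiskTier.PROHIBITED: 0,
    RiskTier.HIGH: 1,
    RiskTier.LIMITED: 2,
    RiskTier.MINIMAL: 3,
}


def review_order(registry):
    """Return the records sorted so that the riskiest systems come first."""
    return sorted(registry, key=lambda record: REVIEW_PRIORITY[record.tier])


# Hypothetical sample entries, for illustration only.
registry = [
    AISystemRecord("spam-filter", "email filtering", Role.USER, RiskTier.MINIMAL),
    AISystemRecord("cv-screener", "candidate ranking", Role.DEVELOPER, RiskTier.HIGH),
    AISystemRecord("support-bot", "customer chatbot", Role.USER, RiskTier.LIMITED),
]

for record in review_order(registry):
    print(f"{record.tier.value:>13}  {record.name} ({record.role.value})")
```

Sorting by tier reflects the point made under risk assessment: different deadlines apply to each category, so the systems in the strictest categories are the natural place to start the gap analysis.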
