Meta’s new AI system adapts more quickly to tackle new types of harmful content

SHARE IT

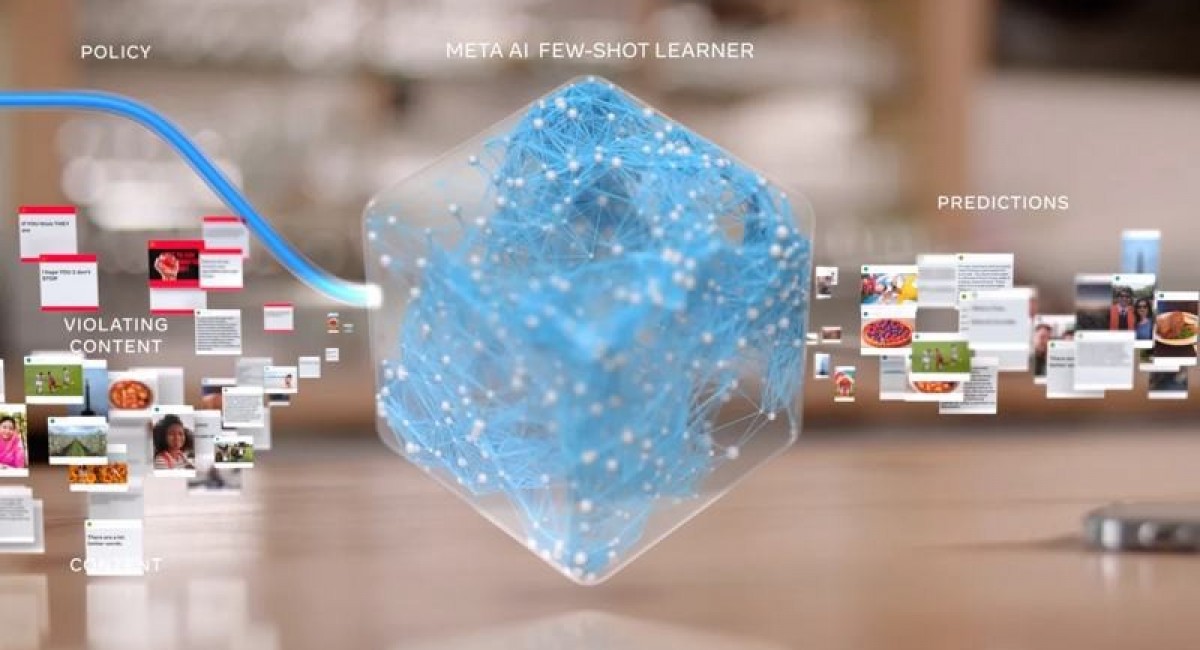

Meta has developed a new artificial intelligence system, used for some moderation tasks, that can adapt to new enforcement assignments far faster than its predecessors because it requires significantly less training data.

The system, called Few-Shot Learner, can automate enforcement of a new moderation rule in about six weeks. The company says the system is helping to enforce a rule, introduced in September, that bans posts likely to discourage people from getting Covid-19 vaccines. The system has also contributed to the company's efforts to reduce hate speech on Facebook, which it first began measuring in mid-2020.

The company has said that artificial intelligence is the only practical way to police its vast network, but despite recent advances, the technology still cannot fully grasp the many forms of human communication. Facebook said recently that it has automated systems to find hate speech and terrorism content in more than 50 languages.

Few-Shot Learner was pre-trained on billions of Facebook posts and images in more than 100 languages. The system uses them to build an internal sense of the statistical patterns of Facebook content. It is then tuned for content moderation through additional training on posts or images labeled in previous moderation projects, along with simplified descriptions of the policies those posts breached.

After that preparation, the system can be directed to find new types of content, for example to enforce a new rule or to expand into a new language, with much less effort than previous moderation models required, says Cornelia Carapcea, a product manager on moderation AI at Facebook.

“Because it’s seen so much already, learning a new problem or policy can be faster,” Carapcea says. “There’s always a struggle to have enough labeled data across the huge variety of issues like violence, hate speech, and incitement; this allows us to react more quickly.”
