ChatGPT introduces a human connection for users in crisis

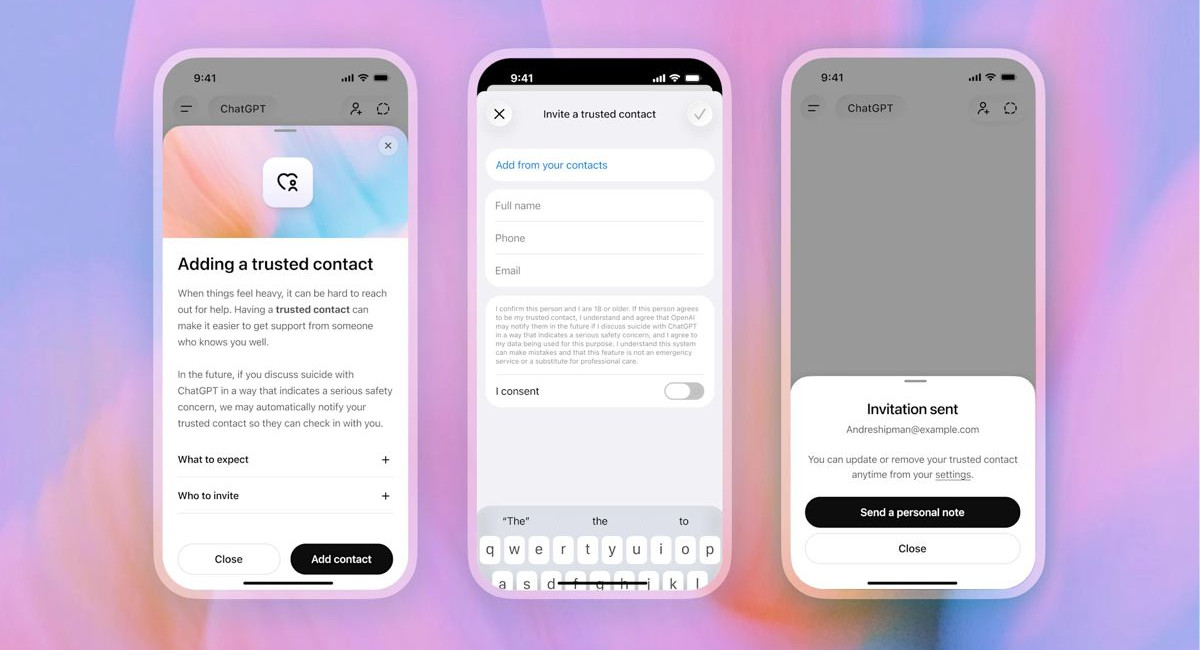

OpenAI has officially launched a new, optional safety feature named Trusted Contact within ChatGPT. Designed specifically for adult users, this tool aims to provide a critical safety net during moments of severe emotional distress or when conversations indicate potential self-harm. By shifting the focus from simple content moderation to active real-world intervention, OpenAI is attempting to address a growing concern: ensuring that vulnerable individuals are not left isolated with an algorithm when they need human empathy the most.

The implementation of Trusted Contact comes at a time of heightened scrutiny for OpenAI. The company has recently faced multiple legal challenges from families alleging that prolonged, unmonitored interactions with ChatGPT contributed to tragic outcomes, including instances where the chatbot allegedly failed to deter, or even engaged with, dangerous thoughts. While courts have yet to establish legal liability, the pressure on AI developers to safeguard users has never been higher. With this feature, ChatGPT evolves from a passive information provider into an active facilitator of real-world support, acknowledging that AI can never replace professional care or the genuine presence of a loved one.

Operating on a strictly voluntary basis, the system allows eligible individuals over the age of 18 to nominate a single trusted adult, such as a close friend, relative, or dedicated caregiver. Setting up the feature is a deliberate process integrated directly into the ChatGPT account settings. Users must provide the name and email address of their chosen contact, while adding a phone number is highly recommended to ensure rapid communication. Once the details are submitted, the nominee receives an official invitation outlining the nature of the role. To ensure active consent, the feature only becomes operational if the designated contact formally accepts the request within a one-week window.
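To make the consent step concrete, the nomination described above can be pictured as a small record that only becomes active once the contact accepts within the one-week window. The Python sketch below is purely illustrative and is not OpenAI's implementation: the class name `TrustedContactInvite`, its fields, and the `accept` method are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# The article's "one-week window" for accepting the invitation.
ACCEPTANCE_WINDOW = timedelta(days=7)


@dataclass
class TrustedContactInvite:
    """Hypothetical record of a nomination; all names here are assumptions."""
    name: str                      # required, per the article
    email: str                     # required, per the article
    sent_at: datetime              # when the invitation went out
    phone: Optional[str] = None    # recommended but optional
    accepted_at: Optional[datetime] = None

    def accept(self, now: datetime) -> bool:
        """Record acceptance only if it arrives inside the window."""
        elapsed = now - self.sent_at
        if self.accepted_at is None and timedelta(0) <= elapsed <= ACCEPTANCE_WINDOW:
            self.accepted_at = now
        return self.accepted_at is not None

    def is_active(self) -> bool:
        """The feature is operational only after formal acceptance."""
        return self.accepted_at is not None
```

In this sketch, an acceptance that arrives on day eight leaves the invite inactive, which mirrors the article's point that the feature does nothing until the contact has actively agreed to the role.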

The underlying mechanics of Trusted Contact balance advanced automated monitoring with crucial human oversight. When a user interacts with ChatGPT, automated internal systems continuously scan the dialogue for red flags related to severe emotional crisis or self-harm. If a significant safety risk is flagged, the platform first prompts the user to seek immediate professional help, offering access to hotlines and emergency resources. Concurrently, instead of relying solely on automated scripts to trigger external alerts, OpenAI routes the flagged conversation to a specialized, trained human review team. These human moderators assess the context of the interaction to determine if the threat is genuine and if outside intervention is warranted.

Should the human review team confirm a serious safety concern, a notification is sent to the user's designated trusted contact via email, text message, or an in-app alert. Privacy remains a paramount consideration in how these alerts are framed. To respect the user's personal boundaries, OpenAI ensures that the trusted contact is never granted access to private chat histories, full transcripts, or screenshots. Instead, the contact receives a brief, generalized message explaining that the user may be experiencing a mental health crisis, alongside guidance on how to check in on them effectively. This approach aims to provide the necessary alert to prompt a real-world welfare check without compromising the digital privacy of the user.
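The sequence described above (automated flag, crisis resources for the user, human review, then a generic alert) can be summarized in a short Python sketch. This is a simplified illustration under stated assumptions, not a description of OpenAI's actual systems: `handle_conversation`, `Outcome`, `Alert`, and the wording of `GENERIC_MESSAGE` are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List, Optional


class Outcome(Enum):
    NO_ACTION = "no_action"
    RESOURCES_ONLY = "resources_only"      # user saw hotlines; no alert sent
    CONTACT_NOTIFIED = "contact_notified"


@dataclass
class Alert:
    recipient: str
    message: str


# Hypothetical wording; the article says only that the message is brief and general.
GENERIC_MESSAGE = (
    "Someone who listed you as a trusted contact may be going through "
    "a difficult time. Please consider checking in on them."
)


def handle_conversation(
    flagged: bool,
    reviewer_confirms: Callable[[], bool],
    contact_email: Optional[str],
    outbox: List[Alert],
) -> Outcome:
    """Sketch of the escalation path; note that no transcript is ever passed in."""
    if not flagged:
        return Outcome.NO_ACTION
    # At this point the user would be shown hotlines and emergency resources
    # (not modelled here).
    if contact_email is None or not reviewer_confirms():
        return Outcome.RESOURCES_ONLY
    # Only a human-confirmed concern reaches the trusted contact.
    outbox.append(Alert(contact_email, GENERIC_MESSAGE))
    return Outcome.CONTACT_NOTIFIED
```

Because the function never receives the conversation text, the alert it produces cannot leak chat content, which is one simple way to express the privacy boundary the article describes.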

Furthermore, OpenAI has designed the system with flexibility and user autonomy in mind. A user can easily modify or completely remove their designated contact at any time through their settings. Similarly, the trusted contact retains the right to withdraw from the role if they no longer feel capable of providing that level of support. The feature is currently restricted to personal adult accounts, so corporate and educational workspaces remain unaffected by it. For younger users, OpenAI has introduced separate parental controls that address similar safety concerns.

Ultimately, Trusted Contact represents a paradigm shift in how artificial intelligence companies approach user welfare. By integrating a mechanism that actively encourages users to step away from the screen and reconnect with their personal support networks, OpenAI acknowledges the limitations of technology in handling profound psychological crises. The tool does not seek to diagnose or treat mental health issues, but rather acts as a digital bridge to real-world relationships. As AI continues to integrate into daily life, initiatives like Trusted Contact emphasize that the most valuable response to human vulnerability is still another human being.