Google introduces interactive 3D models and real-time simulations on Gemini

The artificial intelligence landscape is undergoing yet another shift, and Google is leading the charge by changing how users interact with its flagship AI chatbot, Gemini. In a recent rollout that underscores the company's commitment to visual and interactive learning, Google has equipped Gemini with the ability to generate fully interactive 3D models and real-time functional simulations. With this upgrade, users are no longer confined to reading dense walls of text or looking at static diagrams when trying to understand complex scientific concepts. Instead, they can now manipulate, explore, and visualize subjects ranging from celestial mechanics to quantum physics, all within the chat interface.

For years, digital search and AI assistance have relied heavily on traditional methods of information delivery. Whenever a user asked a complex question about physics or biology, the best they could hope for was a detailed paragraph accompanied by a two-dimensional illustration. Google is breaking this mold by turning Gemini into a virtual laboratory. By simply supplying a well-crafted prompt, anyone can conjure up functional simulations that react to user inputs. This dynamic approach essentially bridges the gap between passive reading and active, hands-on learning, making it a game-changer for curious minds, hobbyists, and lifelong learners who want to grasp the mechanics of the universe in a more profound way.

One of the most fascinating applications of this new feature is the ability to simulate astrophysics right on your screen. For instance, if you want to understand the gravitational relationship between celestial bodies, you can ask Gemini to model how the Moon orbits the Earth. Rather than just giving you an orbital path on a flat plane, the AI generates a simulation complete with manual sliders. These sliders let you play the role of a cosmic architect, adjusting variables such as initial velocity and gravity strength. As you tweak these parameters, you can watch in real time how the changes either stabilize the orbit or send the Moon careening out into the depths of space. It is a level of interactive engagement that previously required specialized educational software.
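For readers who want to see why those two sliders decide the Moon's fate, here is a minimal sketch of the underlying physics. It is not Gemini's output or Google's implementation. It assumes a fixed Earth at the origin, normalized units, and hypothetical names (`simulate_orbit`, `escape_velocity`, `gm` for gravity strength, `v0` for initial velocity).

```python
import math


def escape_velocity(gm=1.0, r0=1.0):
    """Speed above which a body starting at distance r0 is no longer bound."""
    return math.sqrt(2.0 * gm / r0)


def simulate_orbit(v0, gm=1.0, r0=1.0, dt=0.001, steps=20000):
    """Integrate a moon around a fixed planet at the origin.

    The moon starts at (r0, 0) moving straight "up" with speed v0.
    Returns (min_r, max_r), the closest and farthest distances reached.
    Uses semi-implicit Euler: velocity is updated first, then position.
    """
    x, y = r0, 0.0
    vx, vy = 0.0, v0
    min_r = max_r = r0
    for _ in range(steps):
        r = math.hypot(x, y)
        # Inverse-square attraction toward the origin.
        ax = -gm * x / r**3
        ay = -gm * y / r**3
        vx += ax * dt
        vy += ay * dt
        x += vx * dt
        y += vy * dt
        r = math.hypot(x, y)
        min_r = min(min_r, r)
        max_r = max(max_r, r)
    return min_r, max_r


if __name__ == "__main__":
    for v0 in (1.0, 1.2, 1.5):
        lo, hi = simulate_orbit(v0)
        status = "bound" if v0 < escape_velocity() else "escapes"
        print(f"v0={v0:.1f}  min_r={lo:.2f}  max_r={hi:.2f}  ({status})")
```

In these units a starting speed of 1.0 gives a near-circular orbit, while anything above `escape_velocity()` (about 1.41) sends the moon away for good. Raising `gm` has the opposite effect: stronger gravity keeps faster moons bound.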

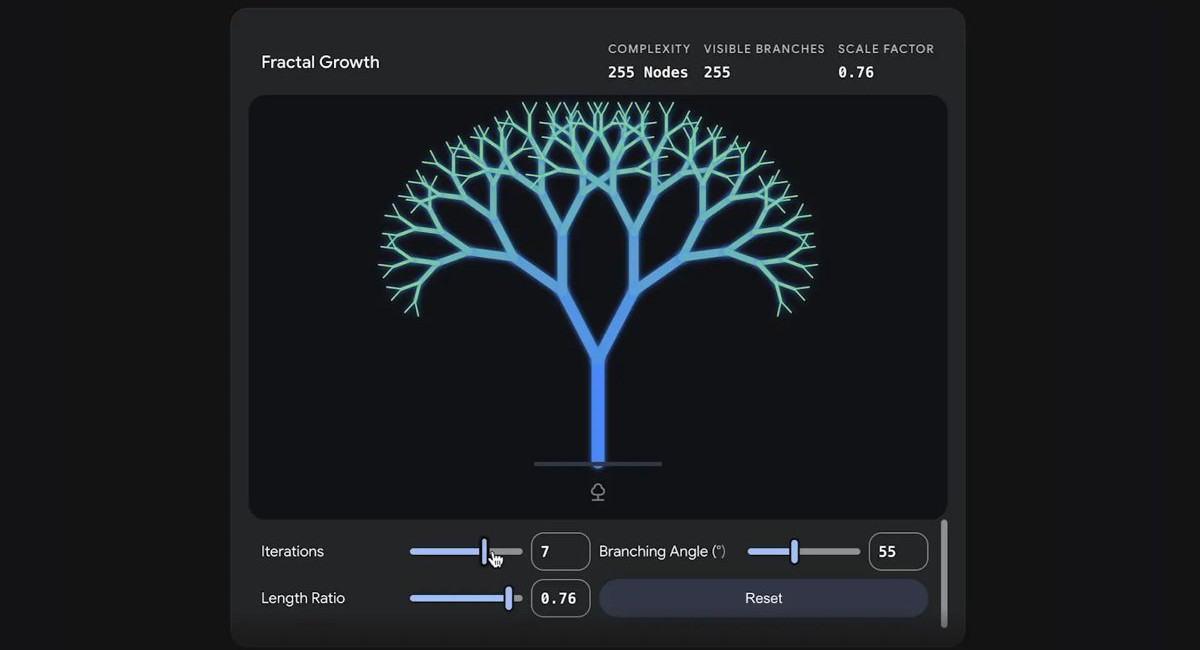

To unlock these capabilities, users simply need to adopt a specific prompting style. Starting a request with phrases like "Show me" or "Help me visualize" signals to the chatbot that a visual, interactive output is desired. Note that this functionality currently requires the Pro model of Gemini. Once activated, the possibilities are vast. A user could type a prompt asking to visualize how fractals work. Gemini will then produce a dynamic representation of fractal growth, with adjustable attributes for the branching angle, length ratio, and number of iterations. Watching the mathematical beauty of a fractal unfold and change based on your own inputs is both mesmerizing and highly educational.
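The three fractal controls mentioned above map naturally onto a recursive branching tree. The sketch below is an illustration only, not what Gemini generates. The function name `fractal_tree` and its parameters are hypothetical, and it returns line segments rather than drawing them.

```python
import math


def fractal_tree(iterations, branch_angle_deg=25.0, length_ratio=0.7, length=1.0):
    """Return the line segments of a binary fractal tree.

    iterations       -- number of branching levels (1 = trunk only)
    branch_angle_deg -- how far each child branch turns from its parent
    length_ratio     -- child length divided by parent length
    Each segment is ((x1, y1), (x2, y2)); the trunk starts at the origin
    and points straight up.
    """
    segments = []
    delta = math.radians(branch_angle_deg)

    def grow(x, y, heading, seg_len, depth):
        if depth == 0:
            return
        x2 = x + seg_len * math.cos(heading)
        y2 = y + seg_len * math.sin(heading)
        segments.append(((x, y), (x2, y2)))
        # Two children: one turned left, one turned right, both shorter.
        grow(x2, y2, heading + delta, seg_len * length_ratio, depth - 1)
        grow(x2, y2, heading - delta, seg_len * length_ratio, depth - 1)

    grow(0.0, 0.0, math.pi / 2, length, iterations)
    return segments


if __name__ == "__main__":
    for n in (1, 3, 6):
        print(n, "iterations ->", len(fractal_tree(n)), "segments")
```

Each extra iteration doubles the number of new branches, so a tree with `n` iterations has `2**n - 1` segments. That growth is why the iteration control changes the picture so dramatically.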

The realm of quantum physics also gets a major accessibility boost through these new tools. Concepts that are notoriously difficult to picture, such as the famous double-slit experiment, can now be explored intuitively. By asking Gemini to show how this experiment works, users receive an interactive visualization where they can alter the wavelength, adjust the wave speed, and change the separation between the slits. Observing how the interference patterns shift and transform in real time provides a concrete understanding of wave-particle duality that textbooks simply cannot match. This hands-on approach demystifies high-level science, making it approachable for the general public.
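To make the link between wavelength, slit separation, and fringe pattern concrete, here is a small sketch of the textbook two-source interference formula. It is an idealized model chosen for illustration, not a description of Gemini's simulation: it ignores the width of each slit, and the function names (`intensity`, `fringe_spacing`) and example values are assumptions.

```python
import math


def intensity(y, wavelength, slit_separation, screen_distance):
    """Relative brightness (0 to 1) at height y on the screen.

    Ideal two-slit pattern: I = cos^2(pi * d * sin(theta) / wavelength),
    where theta is the angle from the slits to the point on the screen.
    All lengths must use the same unit (metres here).
    """
    sin_theta = y / math.hypot(y, screen_distance)
    phase = math.pi * slit_separation * sin_theta / wavelength
    return math.cos(phase) ** 2


def fringe_spacing(wavelength, slit_separation, screen_distance):
    """Small-angle distance between neighbouring bright fringes."""
    return wavelength * screen_distance / slit_separation


if __name__ == "__main__":
    wavelength = 500e-9   # green light, 500 nm (example value)
    separation = 1e-4     # slits 0.1 mm apart (example value)
    distance = 1.0        # screen 1 m away (example value)
    spacing = fringe_spacing(wavelength, separation, distance)
    print(f"fringe spacing: {spacing * 1000:.2f} mm")
    for k in range(5):
        y = k * spacing / 4
        print(f"y={y * 1000:.2f} mm  I={intensity(y, wavelength, separation, distance):.3f}")
```

In this model, a longer wavelength spreads the fringes apart, while moving the slits farther apart squeezes them together.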

According to a recent blog post by Google, this powerful feature is currently rolling out to the general user base. However, there is a catch for certain institutional users: those accessing the platform via Education and Workspace accounts do not currently have access to these interactive 3D simulations. Despite this limitation, the rollout represents a significant leap forward. It builds seamlessly upon the educational foundations Google laid last year, when they introduced clickable, interactive diagrams for subjects like Biology, Chemistry, Physics, and Mathematics. Those earlier diagrams allowed users to click on specific components to reveal deeper explanations and sub-topics, setting the stage for the fully functional simulations we see today.

Ultimately, the integration of interactive 3D models into Gemini is a testament to the evolving role of artificial intelligence in our daily lives. AI is no longer just a tool for generating text, drafting emails, or answering basic trivia. It is rapidly becoming an immersive educational companion that can adapt to our learning styles and provide experiential knowledge. By transforming abstract data into tangible, manipulable simulations, Google is ensuring that the future of information discovery is not just informative, but inherently visual, deeply engaging, and undeniably fun.
