Meta opens the AI looking glass for parents

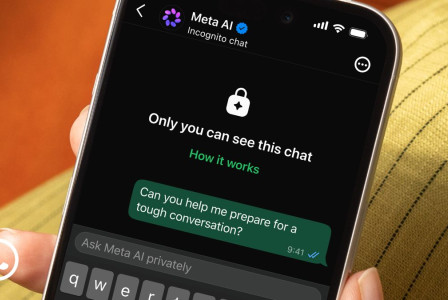

Meta is taking a significant step toward bridging the gap between adolescent privacy and parental oversight. The tech giant has officially introduced a new suite of supervision tools designed to give parents a clearer window into how their teenagers are interacting with Meta AI across its dominant platforms: Facebook, Instagram, and Messenger.

The cornerstone of this update is the new Insights tab, a dedicated dashboard that lists the subjects a teen has explored with the AI assistant over a rolling seven-day window. Rather than providing a verbatim transcript, which would likely trigger backlash over privacy and trust, Meta has opted for a categorical approach. Parents will see broad labels such as School, Entertainment, Travel, or Health and Wellbeing. By tapping on these headings, they can drill down into more specific subcategories; for instance, a general interest in "Lifestyle" might reveal a teen’s curiosity about fashion, food, or upcoming holidays.

This move is strategically positioned as a middle ground. Meta is essentially offering a thematic map of a teenager's digital curiosity without exposing the raw, and often deeply personal, prompts they might send to a chatbot. According to Meta, the goal is to foster transparency and provide a foundation for real-world dialogue. To facilitate this, the company has collaborated with the Cyberbullying Research Center to develop a series of conversation starters, helping parents navigate the potentially awkward transition from digital monitoring to a face-to-face talk about responsible AI use.

The safety measures go beyond simple topic tracking. Meta has integrated stricter guardrails for its AI when it interacts with minors, drawing inspiration from PG-13 movie ratings to keep responses age-appropriate. The company is also doubling down on mental health protections. While the current system shows whether a teen is researching health-related topics, Meta is developing a specialized alert system that will proactively notify parents if a teenager tries to engage the AI in discussions about self-harm or suicide. On these topics, the AI is already programmed to decline the conversation and instead provide links to professional support resources.

However, the rollout is not without its critics. While many parents welcome the visibility, some child safety advocates and sociologists argue that such tools could inadvertently create a culture of surveillance that stifles a teenager's sense of autonomy. There is also the concern that in high-conflict households, this level of oversight could be misused. Despite these debates, Meta is moving forward with a global vision. The feature is currently live for supervised Teen Accounts in the US, UK, Canada, Australia, and Brazil, with a worldwide expansion slated for the coming weeks.

As AI continues to evolve from a novelty into a daily utility for the younger generation, Meta’s latest initiative highlights the industry’s ongoing struggle: how to encourage innovation and exploration while maintaining a safety net that satisfies both regulators and concerned families. For now, the Insights tab stands as Meta’s answer to the "black box" of AI interactions, promising parents a seat at the table in their children's digital lives.
